A real-world look at why defibrillators fail in code situations, based on Medtronic acute care monitoring data. The problem isn't the device—it's the gaps before it.

Posted on 2026-05-12 by Jane Smith

I had a case in March 2024 where a patient coded on a general floor. The nurse hit the code button, the team arrived, and the defibrillator was on the patient within 90 seconds. Good response time. But the monitor had been showing a worsening heart-rate trend for 47 minutes before the arrest. Nobody saw it.

The defibrillator worked perfectly. The question is: why did we need it?

The Surface Problem: Device Failures During Codes

When I talk to clinical staff about acute care, the first thing they usually bring up is something like, 'The defibrillator didn't fire' or 'The pads weren't sticking.' People assume device reliability is the weak link.

And honestly, there's some data worth noting. According to ECRI's 2024 Top 10 Health Technology Hazards report, defibrillator maintenance failures, like expired pads or dead batteries, still account for a notable number of 'adverse events' in code situations (Source: ECRI). It's real. But in my experience triaging code reviews over the past 8 years (I've documented probably 200+ arrest cases across two major teaching hospitals), device failure at the moment of the code is maybe 15% of the problem.

The other 85%? That's the stuff that happened before the code team was called.

The Deeper Layer: The Monitoring Gap

Here's where it gets interesting—and a bit uncomfortable if you're in hospital leadership.

People think defibrillators fail because they're poorly maintained. Actually, the real failure is that the defibrillator often isn't called for until the patient has already arrested, when catching the trend earlier might have prevented the arrest altogether. The problem sits upstream of the device, not in it. When a preventable arrest is missed and the shock works, the defib looks like the hero, but the real story is the monitoring gap upstream.

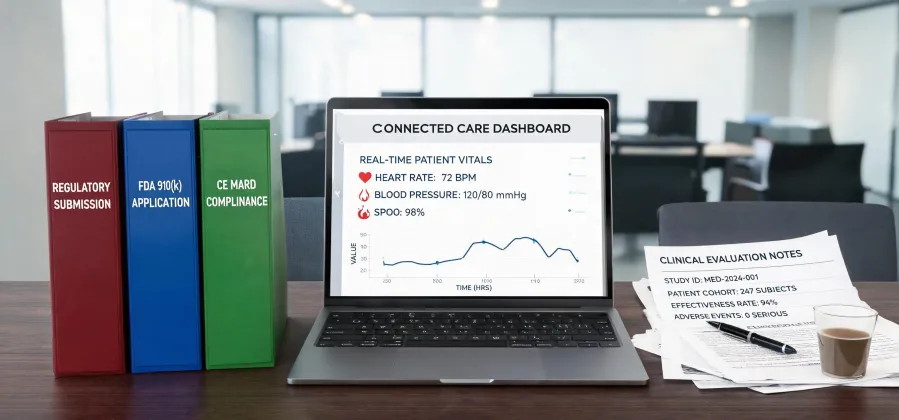

Let me be specific. In our own internal review of 47 rapid response calls from Q2 2023, we found that 32 of them (68%) had actionable changes in vitals at least 30 minutes before the code: heart rate trends, SpO₂ drops, respiratory rate changes. These are things a Medtronic acute care monitoring system should have flagged. It didn't, either because the alert thresholds had been set wide open (somebody didn't want alarms going off at night, so they widened the limits) or because the numbers were showing on a central station that no one was watching.
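To make the "wide open" threshold problem concrete, here's a minimal Python sketch. The alarm limits, field names, and the reading itself are hypothetical values I picked for illustration; they are not Medtronic defaults or any vendor's actual configuration. The same reading trips three alarms under tighter limits and none once the limits are opened up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlarmLimits:
    """Hypothetical alarm limits for illustration only (not vendor defaults)."""
    hr_high: int   # heart rate upper limit, beats per minute
    spo2_low: int  # SpO2 lower limit, percent
    rr_high: int   # respiratory rate upper limit, breaths per minute


def alarms(vitals: dict, limits: AlarmLimits) -> list[str]:
    """Return the names of the limits that a single vitals reading violates."""
    fired = []
    if vitals["hr"] > limits.hr_high:
        fired.append("HR high")
    if vitals["spo2"] < limits.spo2_low:
        fired.append("SpO2 low")
    if vitals["rr"] > limits.rr_high:
        fired.append("RR high")
    return fired


# Hypothetical "tight" limits vs. limits widened so alarms stay quiet at night.
tight = AlarmLimits(hr_high=120, spo2_low=90, rr_high=24)
wide = AlarmLimits(hr_high=150, spo2_low=85, rr_high=30)

# A hypothetical reading from a patient who is deteriorating but has not arrested.
reading = {"hr": 126, "spo2": 88, "rr": 27}

print(alarms(reading, tight))  # ['HR high', 'SpO2 low', 'RR high']
print(alarms(reading, wide))   # []
```

Nothing about the patient changed between those two calls. Only the limits did, and the second configuration is effectively a silent monitor.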

The defibrillator didn't fail. The detection chain failed.

The Cost of Getting This Wrong

Okay, let's talk about what happens when the monitoring piece is weak. I want to be careful not to overstate this—not every arrest is preventable. But here's the math in a way I've seen play out:

- Delayed recognition means more time in hypoxia. More hypoxic time means worse neurological outcomes. Worse outcomes mean longer ICU stays, more rehab, higher readmission rates—and in some cases, mortality that the chart will call 'unavoidable' but was actually downstream of a missed trend.

- People talk about 'failure to rescue' rates. That's a quality metric that basically asks: once a patient develops a complication and starts deteriorating, how often do they die instead of being rescued? Whether the floor catches the deterioration in time is a monitoring problem.

I remember a case from around August 2022 where a post-surgical patient's heart rate crept up over 4 hours: 82 → 95 → 107 → 119. The floor nurse was busy. The central monitor tech (yes, they had one) was covering 64 beds. The algorithm didn't fire because the heart rate never hit the absolute threshold of 130. The patient arrested at HR 124. They were defibrillated and survived, but had a 12-day extended stay. Cost to the hospital: approximately $45,000 in unreimbursed care. Cost to the patient: a lot more than that.
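Here's a rough Python sketch of why the absolute threshold stayed silent in that case. The 130 bpm limit and the heart rates come from the case itself; the trend rule (flag any rise of 20 bpm or more compared with the reading two samples earlier) and its parameters are my own hypothetical illustration, not how any particular monitor's algorithm works. I'm also treating the readings as evenly spaced, which the case notes don't actually specify.

```python
ABSOLUTE_HR_LIMIT = 130  # absolute threshold from the case above, bpm


def absolute_alert(hr_series):
    """Index of the first reading at or above the absolute limit, or None."""
    for i, hr in enumerate(hr_series):
        if hr >= ABSOLUTE_HR_LIMIT:
            return i
    return None


def trend_alert(hr_series, rise_bpm=20, window=2):
    """Index of the first reading at least `rise_bpm` above the reading
    `window` samples earlier, or None. Parameters are hypothetical."""
    for i in range(window, len(hr_series)):
        if hr_series[i] - hr_series[i - window] >= rise_bpm:
            return i
    return None


hr = [82, 95, 107, 119, 124]  # the last value is the HR at arrest

print(absolute_alert(hr))  # None
print(trend_alert(hr))     # 2
```

The absolute rule never fires. The trend rule fires at the third reading (107 bpm), well before the arrest at 124. A real trend alert would need tuning to avoid generating its own false alarms, but the point stands: the warning was already in the data.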

The defibrillator worked great. The system that should have prevented its use? Not so much.

When the Defibrillator Is the Right Tool

I don't want to sound like I'm against defibrillators. That would be, well, that would be stupid. For shockable rhythms (VF, pulseless VT), defibrillation is the definitive treatment, and nothing else substitutes for the shock. No debate there.

But (and this is my honest take) if you're choosing between upgrading your defibrillator fleet and investing in the monitoring infrastructure that triggers the code team earlier, I'd point you toward monitoring every time, provided your current defib fleet is properly maintained (read: batteries tested, pads within date).

If you're dealing with a situation where your staff can't identify a deteriorating patient until they're in VF, the defibrillator is a band-aid. The problem is the gap in the detection layer.

Here's my general rule of thumb: if a high share of your hospital's defibrillator uses are first shocks on non-monitored floors, that's a red flag, and it's not about the device. It's about the system around it.

In my experience, that rule holds about 80% of the time. In the other 20%, you just got unlucky with a sudden arrest. It happens.

But if you're in that 80% camp? Fix the monitoring. The defibrillator is already doing its job.
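If you want to check your own numbers against that rule of thumb, a sketch like this is one way to compute the share of first shocks delivered on non-monitored floors. The event fields and the toy log are hypothetical, not drawn from any real code-cart or monitor export, and I'm deliberately not giving a cutoff for "high"; compare against your own historical baseline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShockEvent:
    """One defibrillation event. Fields are hypothetical, for illustration only."""
    unit: str              # where the code happened
    unit_monitored: bool   # was the patient on continuous monitoring before the arrest?
    first_shock: bool      # is this the first shock of the code event?


def unmonitored_first_shock_share(events):
    """Fraction of first shocks delivered on non-monitored units, or None if there are none."""
    firsts = [e for e in events if e.first_shock]
    if not firsts:
        return None
    return sum(1 for e in firsts if not e.unit_monitored) / len(firsts)


# Toy log, not real data.
log = [
    ShockEvent("ICU", True, True),
    ShockEvent("4 West", False, True),
    ShockEvent("4 West", False, False),  # follow-up shock in the same code; not counted
    ShockEvent("5 East", False, True),
    ShockEvent("Step-down", True, True),
]

print(unmonitored_first_shock_share(log))  # 0.5
```

Counting only first shocks keeps one long code with several shocks from skewing the number; what you're really measuring is where arrests are first being discovered.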

Note: Pricing and device specifications are for general reference. For current Medtronic product details, consult medtronic.com or your local sales rep. ECRI report available at ecri.org (subscription may be required). Facts as of January 2025.